CIEE High School USA Research

High School Exchange Students improve their English language skills on their program.

ENGLISH LANGUAGE PROFICIENCY CHANGES IN STUDENTS ON THE HIGH SCHOOL PROGRAM: BEGINNING OF YEAR COMPARED TO END OF YEAR

Academic Language Solutions joined with CIEE (Council on International Educational Exchange) to study the progress that high school students who come to the USA on a semester or academic year exchange program make in improving their English language skills during the program. This report was published in September 2023.

Introduction

It is generally assumed that cultural exchange students participating in immersion programs will improve their language skills during the course of their program. Whether the visiting student is on a one-month, three-month or full-year experience, language skills will improve. That’s the general assumption, and we think it is a logical one. But has this ever been proven, other than with anecdotal evidence?

In 2017, we conducted research with university students coming to the USA on a three-month work experience and showed that their skills did indeed improve. We also examined sub-skills to see exactly which language skills improved in such a short period.

Soon thereafter, CIEE (Council on International Educational Exchange), the largest student exchange organization in the USA, agreed to test younger students coming to America on a full-year exchange student program. The COVID-19 pandemic delayed the research, but in the fall of 2022 a cohort of incoming high school students was tested to create a baseline of skills that could be compared with scores achieved at the end of a year-long immersion program.

The incoming students were tested using the iTEP (International Test of English Proficiency) High School Exchange test. At the time the students took it, the test measured grammar, listening and reading in 51 multiple-choice questions and included a “mini-interview” that asked students to talk about their coming exchange experience. This interview was recorded and made available to appropriate parties.

The students were administered the test during their first month in the United States. The baseline would have been even more accurate if the students had been tested before their arrival, but for a variety of reasons they were given an alternative test before they came to the USA. This test, the ELTiS (English Language Test for International Students), was not considered to have the degree of accuracy necessary, nor did it provide sufficient sub-skill detail to allow a full comparison at the end of the program.

We were able to create a comparison chart that aligned the ELTiS results with iTEP Exchange results. Upon applying the comparative values, we were able to draw some conclusions in comparing the two tests.

First, it was obvious that the ELTiS exam was not as strict in scoring as the iTEP exam. ELTiS scores were generally about 19% higher than iTEP in assessing the same skills. There are a few potential factors that account for this difference:

- ELTiS tests are often retaken by students to achieve a higher score on a test that is single or dual-form.

- We know of instances where tests are taken 3 or 4 times with the highest score being submitted.

- Since the majority of ELTiS tests are single form, exam content is often shared among students.

- ELTiS tests are administered and proctored by overseas agents who have a vested, financial interest in obtaining the best “passing” score possible.

Overall, iTEP is a more rigorous test than ELTiS. And while our intention in this study is not to compare results of the two tests, we would be remiss to not mention such an obvious and significant difference in testing levels.
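To make the "about 19% higher" comparison concrete, here is a minimal sketch of how such a gap could be computed once both tests are expressed on a common scale. The paired scores, the 0-100 scale, and the function name `mean_percent_gap` are illustrative assumptions; they are not the study's data or its actual alignment method.

```python
from statistics import mean

# Hypothetical paired scores on an assumed common 0-100 scale (NOT study data).
# Each tuple is (aligned ELTiS score, iTEP score) for one student.
paired_scores = [(84.0, 70.0), (90.0, 75.0), (72.0, 60.0), (96.0, 80.0)]


def mean_percent_gap(pairs):
    """Average amount by which ELTiS exceeds iTEP, as a percentage of iTEP."""
    return mean((eltis - itep) / itep * 100 for eltis, itep in pairs)


gap = mean_percent_gap(paired_scores)
print(f"ELTiS is {gap:.1f}% higher than iTEP on average in this made-up sample")
```

Averaging per-student percentage gaps is only one reasonable choice; comparing the two group means would be another and can give a slightly different figure.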

Research methodology and results

Our intent in this study is to create a valid snapshot of how much students (15-18 years old) improve their English skills as a result of the immersion experience of a high school exchange. On this program, a student lives with a host family and is enrolled in a local (usually public) high school. The student attends regular classes and takes part in social and extracurricular activities in their school and community.

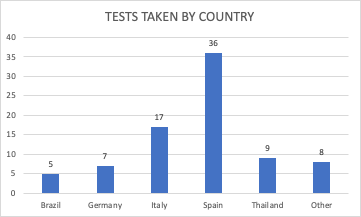

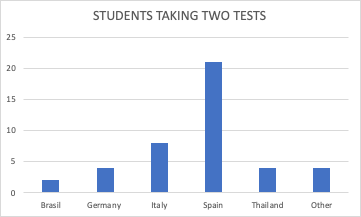

To achieve a good sample of students to study, Academic Language Solutions recommended that CIEE use 275 High School Exchange tests for this study. CIEE sent a mailing to students describing the project and soliciting student participation in the study. Nearly one-third of the students (33.1%) volunteered to participate in the study. These 83 students came from 10 countries, including Brazil, Germany, Italy, Spain, and Thailand. Czech Republic, France, Finland, Mexico and Norway were also represented, but for statistical purposes, have been grouped as “other”, as they had only 1 or 2 students each.

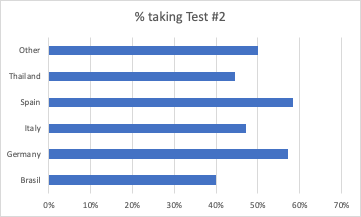

To determine progress in English skills, students needed to take the test a second time. Whereas the initial test (the baseline, or “control,” measurement) was administered in the first month of the exchange, the second test was administered in the final month of the 9-month exchange program. Students were incentivized to take the second test by having their names entered into a drawing for a gift certificate. Each of the five predominant countries had enough students from which to measure positive or negative change. 59% of the students from Spain took the second test. For the other countries: Italy (47%), Germany (58%), Thailand (45%), Brazil (40%), and Other (50%).

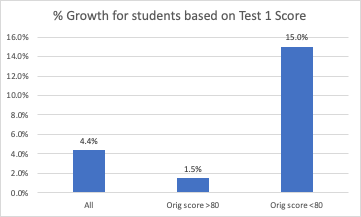

We were able to measure both overall and specific skills as a result of the scores on the second test. We were able to compare these results by country and by original score. We were curious to see how “weaker” students fared compared to stronger students. It would be expected that weaker students would grow in skills strength more on a numerical basis as there is more room for growth, but just how much stronger could the growth be, and how close to the stronger group would the weaker group end up?

We used a score of 80 as the break between weaker and stronger students. The weaker students grew in skills strength by 10.67 points, while the stronger group grew by only 0.33 points. Strong students continued to be strong but did not grow their English skills substantially in the exchange program. The weaker students, however, grew their English skills substantially on the immersion program. In fact, the progress for the weaker students was 15 times the progress of the stronger students.
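The weaker/stronger comparison can be sketched as a simple split on the first-test score followed by an average of each group's point gain. The scores below are hypothetical, and placing a score of exactly 80 in the stronger group is an assumption; the report does not say how that boundary case was handled.

```python
from statistics import mean

CUTOFF = 80  # break between weaker and stronger students, as stated in the report

# Hypothetical (first_test, second_test) overall scores; NOT study data.
scores = [(62, 75), (71, 80), (78, 87), (85, 86), (91, 91), (88, 88)]


def mean_gain_by_group(pairs, cutoff=CUTOFF):
    """Return (mean gain of weaker group, mean gain of stronger group).

    Assumption: a first-test score equal to the cutoff counts as "stronger".
    """
    weaker = [second - first for first, second in pairs if first < cutoff]
    stronger = [second - first for first, second in pairs if first >= cutoff]
    return mean(weaker), mean(stronger)


weaker_gain, stronger_gain = mean_gain_by_group(scores)
print(f"weaker: +{weaker_gain:.2f} points, stronger: +{stronger_gain:.2f} points")
```

Grouping by the first-test score, rather than the second, keeps group membership fixed before any growth is measured.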

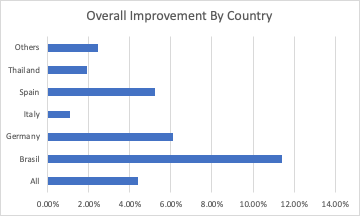

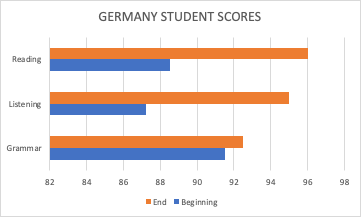

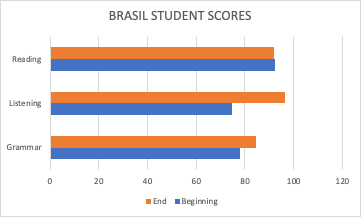

Which countries had the strongest growth over the course of the exchange program? From the chart below, you can see that the strongest growth occurred among the Brazilian students. The German students were second, closely followed by the Spanish students. The Other, Thai and Italian students had the lowest percentages of growth. Every country group showed some growth.

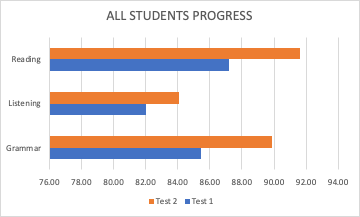

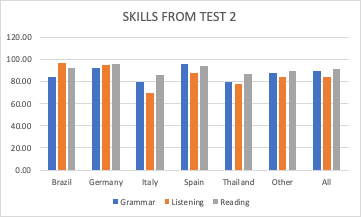

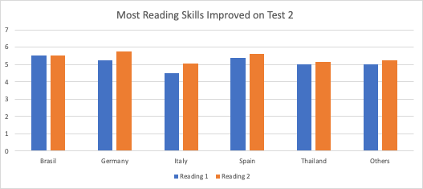

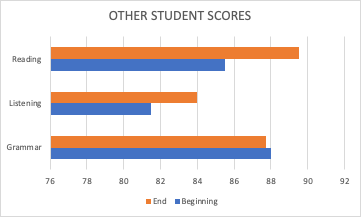

One of the strengths of iTEP exams is the ability to measure skills and sub-skills in addition to overall change. From the first test, you can see that the skills of the overall group of students were strongest for reading, followed by grammar, with listening being recorded as the weakest skill. Following an academic year in an immersion program, the skills remain ranked in the same order, but growth was seen in each skill.

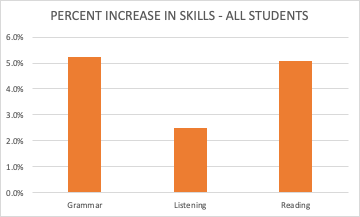

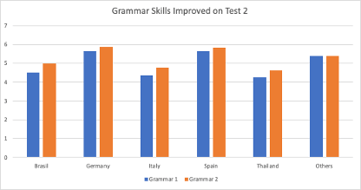

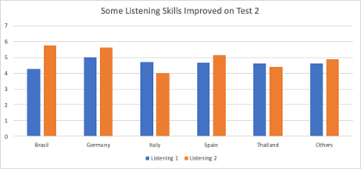

From the second chart below, grammar and reading progressed at about the same rate, while listening skills increased at about half the rate of the other skills. This is perhaps one of the surprises of the research. One might expect that constant immersion (with a host family, in a classroom, and in extracurricular and social activities) would improve active listening skills substantially. While listening skills did improve, they did not grow as strongly as expected.

Grammar and reading are more academic skills, and it could be concluded that the exchange program is as much an academic (based in a school) experience as a social experience. Before this research, anecdotal conclusions would not generally support that claim.

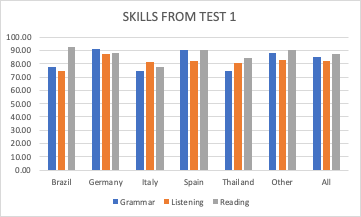

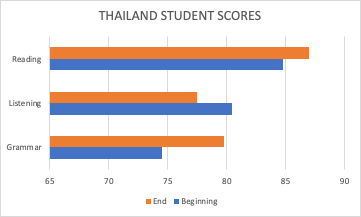

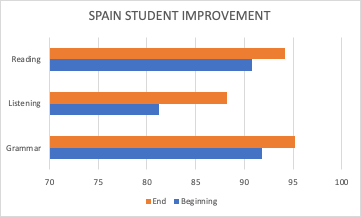

How did skills grow for students from different countries? If we look initially at the control (first) test results, we can see these skills broken out by country. Only among the Italian students did listening top reading and grammar. For Thai students, reading was the strongest skill, followed by listening and grammar. Only among German students was grammar stronger than reading and listening; among Spanish students, reading and grammar skills were equal. In most cases, reading topped grammar, which topped listening.

What happened with these skills after a year of academic and social exposure? Except for the students from Spain, after a year on the exchange program, reading was a stronger skill than grammar. Listening grew to be the strongest skill for Brazilian students. Overall, though, the relationship between the skills at the end of the year remained much the same as at the beginning of the program.

We can look at individual skills and see how they progressed in students from different countries. In the three charts below, you can see that skills generally improved across the board. The only drop we see is in listening skills for Italian and Thai students.

Now, let’s look at skills on a per-country basis. Again, we can see the strong growth for German students, particularly in reading and listening, and we observe the drop in listening skills for Italian and Thai students.

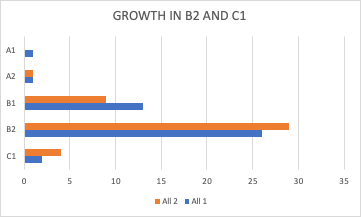

One area we have not yet examined is how students fared on the all-important CEFR ratings. CEFR (Common European Framework of Reference for Languages) is a global scale for measuring language proficiency. There are six levels of proficiency (from the bottom up: CEFR A1, A2, B1, B2, C1, C2). A2, for example, is a basic level at which speakers can understand sentences and frequently used expressions related to basic family and personal information, shopping, local geography, employment, etc., and can exchange simple and direct information on familiar and routine matters. Each level on the CEFR scale has its own functional description.

While an individual can be assigned a particular CEFR level, using this scale in terms of groups is difficult. Having said that, we have been able to measure growth on the CEFR scale for the individuals involved in this research testing. The chart below shows how levels have changed from Test 1 to Test 2. What you would be looking for in this chart would be a stronger presence of orange in the bottom part of the chart. You can see that the most activity on the chart occurs between B1 and B2. Growth from B1 to B2 seems to be the area of most growth, B2 being an “independent” category, just short of the C ratings labeled “proficient.”
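The kind of Test 1 to Test 2 level comparison described above can be sketched by counting each student's level transition on the ordered CEFR scale. The level pairs below are hypothetical and the helper name `summarize_transitions` is an illustrative assumption, not part of the study's tooling.

```python
from collections import Counter

CEFR_LEVELS = ["A1", "A2", "B1", "B2", "C1", "C2"]  # lowest to highest
RANK = {level: i for i, level in enumerate(CEFR_LEVELS)}

# Hypothetical (Test 1 level, Test 2 level) pairs; NOT study data.
results = [
    ("B1", "B2"), ("B1", "B2"), ("B2", "B2"),
    ("A2", "B1"), ("B2", "C1"), ("B1", "B1"),
]


def summarize_transitions(pairs):
    """Count each (before, after) transition and the direction of movement."""
    transitions = Counter(pairs)
    direction = Counter(
        "up" if RANK[after] > RANK[before]
        else "down" if RANK[after] < RANK[before]
        else "same"
        for before, after in pairs
    )
    return transitions, direction


transitions, direction = summarize_transitions(results)
# Most frequent transition among students whose level actually changed.
most_common_move = max(
    (t for t in transitions if t[0] != t[1]), key=transitions.get
)
print(most_common_move, dict(direction))
```

Counting individual transitions, rather than averaging levels, respects the point that CEFR levels are categories assigned to individuals and are awkward to aggregate for a group.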

Summary and Conclusions

Students on the high school exchange program did, as expected, improve in multiple areas of their English language skills. Our research studied specific skills in language assessment. We examined progress in reading, grammar and listening in addition to overall proficiency. We watched closely how skills changed relative to the CEFR (Common European Framework of Reference) scale. Some pertinent observations from the research project include:

- The students tested are taking part in an immersion program, living with a host family and attending a local high school.

- The students were between 15 and 18 years old.

- Nearly one-third (33.1%) of the students selected volunteered to participate in the first test in the study. 51% of those students took the second test in the study.

- 10 countries were represented.

- Scores tracked and compared included those from the ELTiS (English Language Test for International Students) and the iTEP (International Test of English Proficiency) exams.

- ELTiS scores were found to be about 19% higher than iTEP scores. We believe this to be a result of factors like retaking of tests, shared exam content, and faulty proctoring by parties with a vested interest in having students score higher. iTEP appeared to be a more rigorous test than ELTiS.

- Students were categorized into weaker and stronger groups based on the results of the first test. A cutoff score of 80 (on a 100-point scale) was used to separate the groups. Weaker students improved their English scores 15 times as much as the stronger students.

- Reading and grammar improved at twice the rate of listening despite the immersion nature of the program. This was a surprising conclusion. Reading was the strongest skill at the beginning of the program and remained so at the end of the program.

- It is possible that the academic emphasis of the program outweighed the social aspect, which would explain the stronger gains in the more academic skills.

- In addition to showing skills growth according to iTEP scores, students as a group showed progress on the CEFR scale, with the greatest improvement being from Level B1 to Level B2, which is a strong level of independence in language use.

It is important to note that speaking skills were not measured in this research. At the time of the testing, the ELTiS test did not have a speaking section, and the iTEP exam had only an ungraded speaking section. Since then, the iTEP test has been upgraded to having the speaking section scored by trained ESL professionals. In October 2022, at the Council of Standards for International Educational Travel (CSIET) annual meeting, a research survey was presented that showed schools and sponsors both requesting a test that included graded speaking. Schools reported that students’ real proficiency did not match the ELTiS scores, and host families expressed concern that student proficiency was too low. With the introduction in November 2023 of the new iTEP Exchange test with graded speaking, sponsors, schools and families now have access to a more complete measurement of students’ English skills.

In summary, this research provides empirical evidence that high school exchange programs, and in particular the CIEE program, contribute to significant improvements in language skills among participating students. Young people, especially those with lower to intermediate language skills, improved their skills through participation in the high school exchange program. This is consistent with past research that shows improvement in language skills on shorter-term work exchanges (another J-1 visa program).
